Towards Learning Foundation Models for Heuristic Functions to Solve Pathfinding Problems

Published in GenPlan Workshop, AAAI 2025

DeepCubeAF is a reinforcement learning foundation model that learns transferable heuristic functions for pathfinding across diverse PDDL domains without relying on optimal-label supervision.

Why it matters

- Existing DRL-based heuristic learners must be retrained even for small domain changes, which is costly in compute and time.

- Supervised approaches (for example, GOOSE) require optimal solution labels, which are not always obtainable for complex pathfinding domains.

- Domain-independent planners (for example, Fast Downward with FF heuristic) can struggle on complex or randomized domains.

- There is a need for a foundation model for heuristic functions that generalizes across diverse PDDL domains without assuming supervised labels.

- Training on limited domains restricts generalization; broader domain diversity may improve robustness.

What we did

- Introduced DeepCubeAF, a reinforcement-learning-based foundation model for learning heuristic functions across pathfinding domains.

- Used PDDLFUSE to generate diverse, randomized PDDL domains for training.

- Applied hindsight experience replay (HER) to generate additional goal states and increase training data.

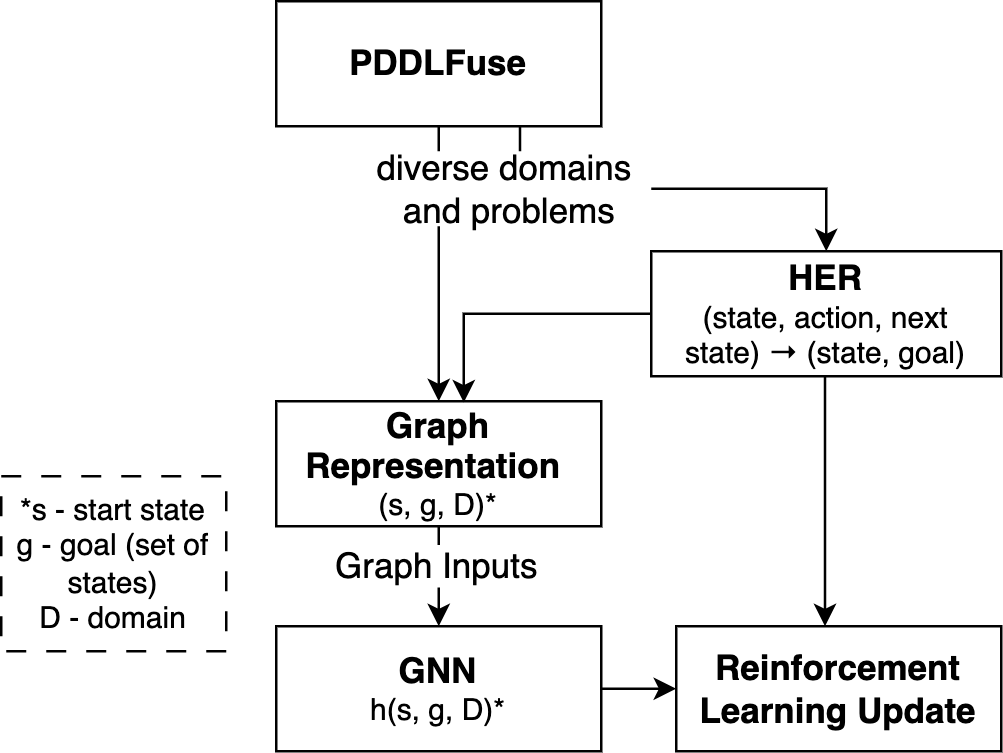

- Represented planning problems as STRIPS Learning Graphs (SLGs) and trained a 16-layer GNN heuristic via approximate value iteration.

- Trained for 1 million iterations (batch size 1000) and solved problems using batch weighted A* (batch size 100, weight 0.8).

- Achieved higher coverage than GOOSE across all eight evaluated domains and solved 60% of instances on the unseen folding domain (vs. 38% for GOOSE and Fast Downward).

How it works

- Domain generation: PDDLFUSE fuses and randomizes PDDL domains to create diverse training environments.

- Goal augmentation: Hindsight experience replay (HER) generates additional goals from intermediate states.

- Graph encoding: each (state, goal, domain) tuple is represented as a STRIPS Learning Graph (SLG).

- Heuristic learning: a 16-layer message-passing GNN is trained with an approximate value iteration loss.

- Search: batch weighted A* (batch size 100, weight 0.8) uses the learned heuristic for solving.
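The goal augmentation and heuristic learning steps above can be illustrated with a minimal sketch. It is not the paper's implementation: the names `hindsight_goal` and `avi_target` are hypothetical, states and goals are assumed to be plain sets of STRIPS facts, and the heuristic is passed in as an ordinary function rather than a GNN over SLGs. The sketch shows one common way to relabel goals in hindsight and to compute a one-step approximate value iteration target that a network would then be regressed toward.

```python
import random
from typing import Callable, FrozenSet, Iterable, List, Tuple

State = FrozenSet[str]  # assumption: a state is the set of facts that hold
Goal = FrozenSet[str]   # assumption: a goal is a set of facts that must all hold


def is_goal(state: State, goal: Goal) -> bool:
    """A state satisfies a goal when every goal fact is true in it."""
    return goal <= state


def hindsight_goal(visited: List[State], rng: random.Random) -> Goal:
    """Hindsight relabeling (hypothetical helper): pick a state that was
    actually reached and use a random non-empty subset of its facts as a goal,
    so the goal is reachable by construction."""
    reached = rng.choice(visited)
    facts = sorted(reached)
    if not facts:
        return frozenset()
    k = rng.randint(1, len(facts))
    return frozenset(rng.sample(facts, k))


def avi_target(
    state: State,
    goal: Goal,
    successors: Callable[[State], Iterable[Tuple[State, float]]],
    heuristic: Callable[[State, Goal], float],
) -> float:
    """One-step value iteration backup: 0 at the goal, otherwise the cheapest
    (action cost + estimated cost-to-go of the child)."""
    if is_goal(state, goal):
        return 0.0
    return min(
        (
            cost + (0.0 if is_goal(child, goal) else heuristic(child, goal))
            for child, cost in successors(state)
        ),
        default=float("inf"),  # dead end: no applicable actions
    )
```

In a training loop under these assumptions, `avi_target` would be evaluated with a periodically updated copy of the network as `heuristic`, and the network would be trained to minimize the squared error between its prediction and that target.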

Figure 1 illustrates how diverse domains and goals are converted into graph inputs for heuristic learning.
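The search step can be sketched in the same spirit. The function below is a simplified, hypothetical `batch_weighted_astar`, not the authors' code. It assumes the weight scales the path cost, so nodes are ordered by `weight * g + h` (the convention used in earlier DeepCubeA-style batch weighted A* search). It also assumes the heuristic is exposed as a function that scores a list of states in one call, which is what makes expanding nodes in batches worthwhile with a neural heuristic.

```python
import heapq
from itertools import count
from typing import Callable, Dict, Hashable, Iterable, List, Optional, Tuple

S = Hashable  # any hashable state representation


def batch_weighted_astar(
    start: S,
    is_goal: Callable[[S], bool],
    successors: Callable[[S], Iterable[Tuple[S, float]]],
    heuristic_batch: Callable[[List[S]], List[float]],
    batch_size: int = 100,
    weight: float = 0.8,
    max_iterations: int = 10_000,
) -> Optional[List[S]]:
    """Return a path from start to a goal state, or None if none is found.
    The path is not guaranteed to be optimal."""
    tie = count()  # tie-breaker so the heap never compares states directly
    best_g: Dict[S, float] = {start: 0.0}
    parent: Dict[S, Optional[S]] = {start: None}
    open_heap = [(heuristic_batch([start])[0], next(tie), 0.0, start)]

    for _ in range(max_iterations):
        if not open_heap:
            return None
        # Pop up to batch_size of the most promising nodes.
        batch: List[Tuple[float, S]] = []
        while open_heap and len(batch) < batch_size:
            _f, _t, g, state = heapq.heappop(open_heap)
            if g > best_g[state]:
                continue  # stale entry: a cheaper path was found later
            if is_goal(state):
                path = [state]
                while parent[path[-1]] is not None:
                    path.append(parent[path[-1]])
                return path[::-1]
            batch.append((g, state))
        # Expand the whole batch, keeping only children reached more cheaply.
        children: Dict[S, None] = {}
        for g, state in batch:
            for child, cost in successors(state):
                new_g = g + cost
                if new_g < best_g.get(child, float("inf")):
                    best_g[child] = new_g
                    parent[child] = state
                    children[child] = None
        # Score all new children with a single heuristic call.
        if children:
            child_list = list(children)
            for child, h in zip(child_list, heuristic_batch(child_list)):
                g = best_g[child]
                heapq.heappush(open_heap, (weight * g + h, next(tie), g, child))
    return None
```

Under this convention, a weight below 1 discounts the path cost relative to the heuristic, which tends to make the search greedier, and a larger batch size trades some search precision for fewer heuristic evaluations.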

Key contributions

- Introduces DeepCubeAF, a reinforcement-learning-based foundation model for heuristic learning in pathfinding.

- Proposes an enhanced PDDLFUSE with adaptive negation and planner-aligned action generation to produce solvable and diverse domains.

- Demonstrates improved generalization over supervised GNN heuristics (GOOSE) across eight domains and leave-one-out settings.

- Shows competitive or superior performance to Fast Downward (FF heuristic) on several domains and improved generalization to an unseen domain.

Recommended citation: Vedant Khandelwal, Amit Sheth, and Forest Agostinelli. (2025). "Towards Learning Foundation Models for Heuristic Functions to Solve Pathfinding Problems." GenPlan Workshop, AAAI 2025.

Download Paper | Download Slides