NeuroSymbolic Knowledge-Grounded Planning and Reasoning in Artificial Intelligence Systems

Published in IEEE Intelligent Systems, 2025

This paper presents a layered neurosymbolic approach that couples language models with symbolic reasoning and knowledge graphs to produce interpretable, constraint-aware plans.

Why it matters

- Decision-support systems in domains such as health care require multistep reasoning, constraint enforcement, and regulatory compliance.

- LLMs generate coherent language but struggle with structured search, logical verification, and protocol adherence.

- Implicit knowledge representations prevent explicit state tracking, rule enforcement, and safety validation.

- High-stakes environments require interpretable, constraint-aware, and dynamically adaptable planning, which standalone LLMs do not guarantee.

What we did

- Proposed a neurosymbolic framework integrating domain-adapted LLMs with knowledge graphs (KGs), symbolic reasoning, and constraint-aware planning.

- Used the LLM to generate initial candidate plans in natural language aligned to domain context.

- Transformed LLM outputs into structured representations compatible with KG-backed reasoning modules.

- Applied deductive reasoning for logical consistency and abductive reasoning to resolve incomplete or conflicting constraints.

- Illustrated the approach through a health-care MTSS use case involving a 16-year-old student subject to protocol and budget constraints.
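
The deductive and abductive steps above can be illustrated with a minimal sketch. It is not the paper's implementation: the rule base, the fact names (`screening_done`, `budget_available`, and so on), and the functions `deduce` and `abduce` are all hypothetical, chosen only to show forward chaining for consistency checking and a brute-force search for the smallest set of assumptions that would make a goal derivable.

```python
from itertools import combinations

# Hypothetical Horn rules (assumption, not from the paper): (premises, conclusion).
RULES = [
    ({"screening_done", "risk_elevated"}, "tier2_eligible"),
    ({"tier2_eligible", "budget_available"}, "counseling_approved"),
]


def deduce(facts, rules=RULES):
    """Deduction by forward chaining: apply rules until nothing new is derived."""
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= derived and conclusion not in derived:
                derived.add(conclusion)
                changed = True
    return derived


def abduce(facts, goal, abducibles, rules=RULES):
    """Abduction: return the smallest sets of assumable facts that entail `goal`."""
    candidates = sorted(abducibles)
    for size in range(len(candidates) + 1):
        found = [
            set(combo)
            for combo in combinations(candidates, size)
            if goal in deduce(set(facts) | set(combo), rules)
        ]
        if found:
            return found
    return []


known = {"screening_done", "risk_elevated"}
print(sorted(deduce(known)))
print(abduce(known, "counseling_approved", {"budget_available", "parent_consent"}))
```

With these toy rules, deduction adds `tier2_eligible` to the known facts, and abduction reports that assuming `budget_available` alone is enough to reach `counseling_approved`. A real system would query a knowledge graph and use a proper reasoner instead of exhaustive enumeration.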

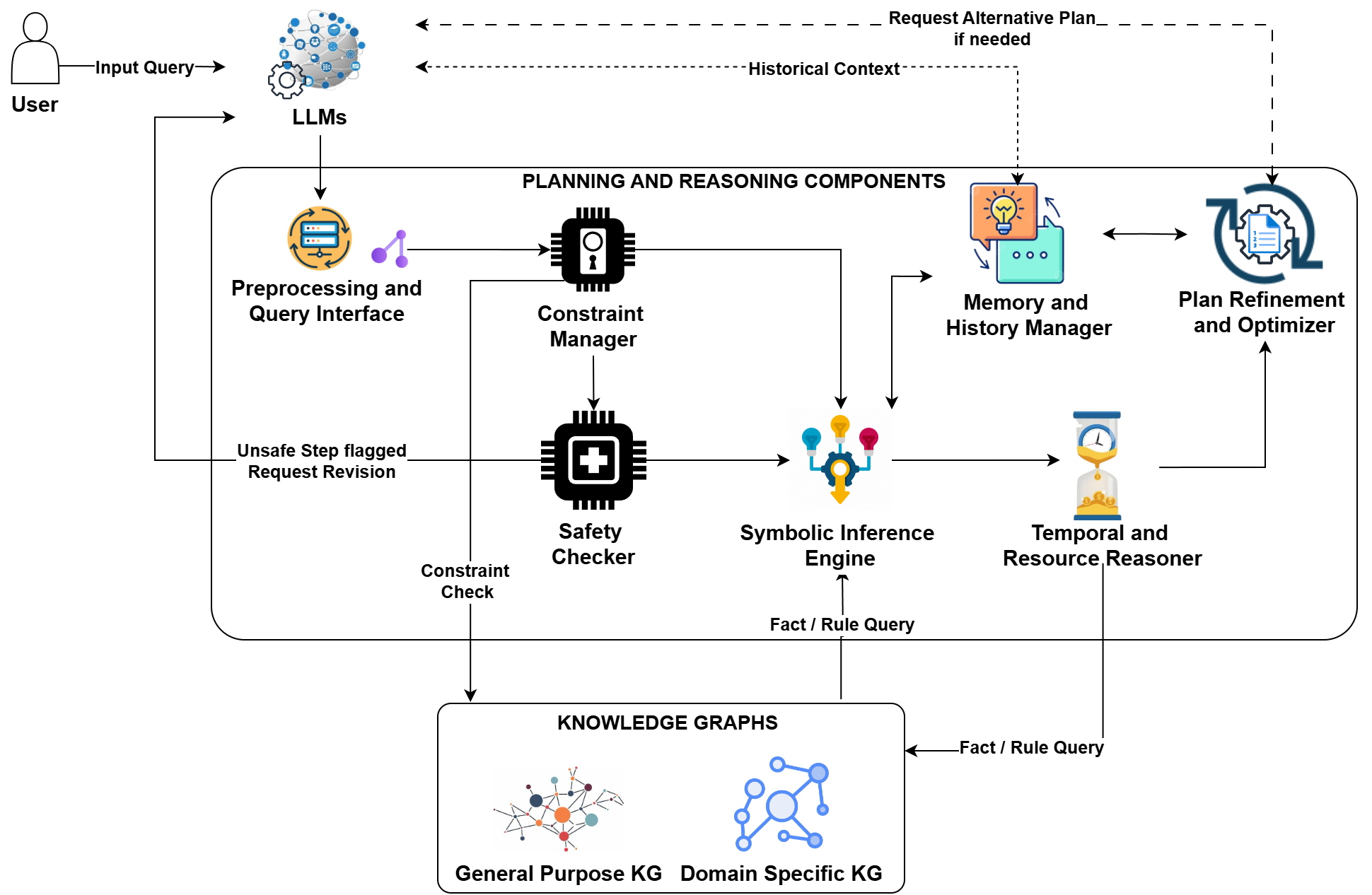

Figure 1 shows how the LLM, symbolic modules, and knowledge graphs interact across grounding, reasoning, and planning layers.

How it works

- Domain-adapted LLM interprets user queries and generates candidate plans in natural language.

- Preprocessing interface converts textual outputs into structured actions and conditions aligned with KG entities.

- Constraint manager and safety checker validate compliance with domain rules and flag violations.

- Symbolic inference engine applies deductive reasoning for verification and abductive reasoning for resolving gaps.

- Temporal and resource reasoner and optimizer ensure feasibility and iteratively refine plans based on history.
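
As a rough sketch of the first stages listed above, the snippet below grounds free-text plan lines in a toy knowledge graph and then checks ordering and budget constraints. Everything in it is an assumption made for illustration: the `KG` dictionary, its entities, aliases and costs, and the helper names `ground` and `check` do not come from the paper. Alias matching by substring stands in for whatever preprocessing interface the framework actually uses.

```python
from dataclasses import dataclass

# Hypothetical knowledge-graph fragment (assumption): entity -> attributes.
KG = {
    "screening": {"aliases": ("screening",), "cost": 50, "requires": ()},
    "counseling": {"aliases": ("counseling", "counselling"), "cost": 300,
                   "requires": ("screening",)},
    "specialist_referral": {"aliases": ("referral", "specialist"), "cost": 800,
                            "requires": ("screening",)},
}


@dataclass(frozen=True)
class Action:
    entity: str
    cost: int


def ground(plan_text):
    """Map each non-empty line to the first KG entity whose alias it mentions."""
    actions, unmatched = [], []
    for line in plan_text.splitlines():
        text = line.strip().lower()
        if not text:
            continue
        for entity, attrs in KG.items():
            if any(alias in text for alias in attrs["aliases"]):
                actions.append(Action(entity, attrs["cost"]))
                break
        else:
            # No alias matched: keep the line so a caller can flag it.
            unmatched.append(line.strip())
    return actions, unmatched


def check(actions, budget):
    """Return violations: prerequisites taken out of order and budget overruns."""
    violations, done = [], set()
    for action in actions:
        for prereq in KG[action.entity]["requires"]:
            if prereq not in done:
                violations.append(f"{action.entity} requires {prereq} first")
        done.add(action.entity)
    total = sum(a.cost for a in actions)
    if total > budget:
        violations.append(f"total cost {total} exceeds budget {budget}")
    return violations


plan = "1. Start weekly counseling sessions\n2. Run a mental health screening\n3. Go for a walk"
actions, unmatched = ground(plan)
print(check(actions, budget=300))
print(unmatched)
```

For this sample plan the checker flags both that counseling precedes the screening it depends on and that the total cost of 350 exceeds the budget of 300, while the third line is returned as unmatched because it names no known entity.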

Key contributions

- Introduces a layered neurosymbolic architecture organized around knowledge grounding, reasoning integration, and dynamic planning.

- Formalizes the integration of LLM-generated plans with KG-backed constraint checking and logical verification.

- Demonstrates deductive and abductive reasoning over structured domain knowledge to resolve conflicts (for example, protocol versus budget).

- Illustrates an iterative feedback loop between LLM and symbolic modules to ensure policy-compliant, executable plans.
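
The iterative feedback loop named in the last contribution could look something like the following sketch. It is an assumption-laden toy, not the authors' method: `stub_llm` is a deterministic stand-in for a language model, and `COSTS`, `REQUIRED_FIRST`, `verify`, and `refine` are hypothetical names. The point is only the control flow, in which symbolic feedback is returned to the proposer until the plan passes or a round limit is reached.

```python
from typing import Callable

Plan = list[str]

# Hypothetical costs and protocol rule (assumptions for illustration only).
COSTS = {"screening": 50, "counseling": 300, "specialist_referral": 800}
REQUIRED_FIRST = "screening"


def verify(plan: Plan, budget: int) -> list[str]:
    """Symbolic check: return feedback messages, empty if the plan complies."""
    feedback = []
    if not plan or plan[0] != REQUIRED_FIRST:
        feedback.append("protocol: start with screening")
    if sum(COSTS[step] for step in plan) > budget:
        feedback.append("budget: drop the most expensive step")
    return feedback


def stub_llm(plan: Plan, feedback: list[str]) -> Plan:
    """Stand-in for the LLM: applies each feedback item mechanically."""
    revised = list(plan)
    for item in feedback:
        if item.startswith("protocol"):
            revised = [REQUIRED_FIRST] + [s for s in revised if s != REQUIRED_FIRST]
        elif item.startswith("budget"):
            # Never drop the step that the protocol mandates.
            removable = [s for s in revised if s != REQUIRED_FIRST]
            if removable:
                revised.remove(max(removable, key=COSTS.get))
    return revised


def refine(plan: Plan, budget: int,
           propose: Callable[[Plan, list[str]], Plan] = stub_llm,
           max_rounds: int = 5):
    """Alternate between proposing and verifying; return plan, feedback, history."""
    history = [list(plan)]
    feedback = verify(plan, budget)
    rounds = 0
    while feedback and rounds < max_rounds:
        plan = propose(plan, feedback)
        history.append(list(plan))
        feedback = verify(plan, budget)
        rounds += 1
    return plan, feedback, history


final_plan, remaining, history = refine(["specialist_referral", "counseling"], budget=400)
print(final_plan, remaining, len(history))
```

Here the protocol-versus-budget conflict is resolved in one revision: screening is moved to the front and the specialist referral is dropped, leaving a plan of screening followed by counseling. Returning the remaining feedback rather than raising lets a caller see why no compliant plan was found when the budget cannot be met.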

Recommended citation: Amit Sheth, Vedant Khandelwal, Kaushik Roy, Vishal Pallagani, and Megha Chakraborty. (2025). "NeuroSymbolic Knowledge-Grounded Planning and Reasoning in Artificial Intelligence Systems." IEEE Intelligent Systems, pp. 27-34.
