AI-Augmented Search for Systematic Reviews: A Comparative Analysis

Published in Proceedings of the Association for Information Science and Technology (ASIS&T), 2025

This paper provides a comparative analysis of AI-augmented search methods for systematic reviews, with a focus on relevance, reproducibility, and interpretability.

Why it matters

- Systematic reviews are slow and labor-intensive (a reported average of 67 weeks), and errors in the search strategy can distort which evidence gets included.

- This paper focuses on the searching stage, where AI-generated strategies can be hard to reproduce or inspect, making it risky to rely on them without expert oversight.

What we did

- We compared a human-in-the-loop neurosymbolic system (NeuroLit Navigator) against three commercial LLM-based tools (Scite, Consensus, Perplexity) for systematic-review-style searching.

- Across public health and computer science queries, we evaluated outputs for relevancy, reproducibility, interpretability, and controlled vocabulary usage.

- With iterative refinement enabled, NeuroLit Navigator reached a mean relevance of 65% on computer science queries while remaining reproducible and interpretable.

| System | Relevance (%) | Reproducibility | Interpretability | Use of Controlled Vocabulary |
|---|---|---|---|---|
| Scite | 38 | No | No | No |
| Consensus | 47 | No | No | No |
| Perplexity | 40 | No | No | No |
| NeuroLit Navigator | 65 | Yes | Yes | Yes |

In the evaluation, NeuroLit Navigator is the only tool that supports reproducible and interpretable query logic (Table 1).

How it works

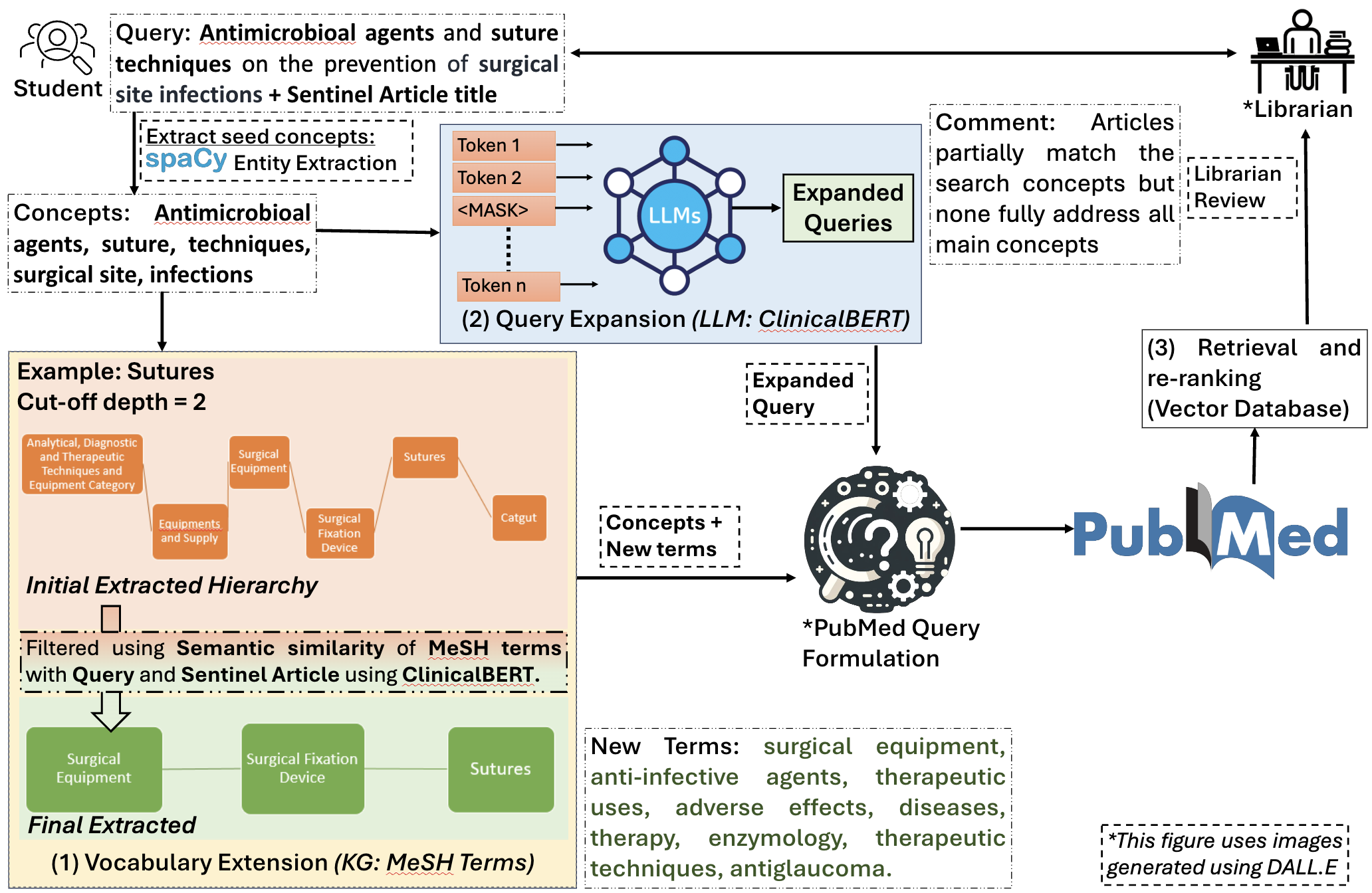

- Take a research question plus 1–2 exemplar (“gold standard”) articles from the librarian.

- Extract key entities via domain NER (e.g., SciSpacy).

- Expand terms using MeSH/UMLS (graph-based hops) and a domain LLM (e.g., ClinicalBERT) for related phrasing.

- Build a Boolean query, then retrieve from PubMed (Entrez API) and re-rank via embeddings (e.g., MPNet) with similarity scores shown to users.
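The Boolean query step can be illustrated with a minimal sketch. It assumes the common search-strategy convention of OR-ing synonyms within a concept and AND-ing across concepts; the paper summary does not specify the exact construction. The function name `build_boolean_query`, the concept labels, and the example terms are hypothetical, and `[Title/Abstract]` is used here as one of PubMed's standard field tags.

```python
from typing import Dict, List


def build_boolean_query(concepts: Dict[str, List[str]],
                        field: str = "Title/Abstract") -> str:
    """OR the terms inside each concept group, then AND the groups together.

    `concepts` maps a (hypothetical) concept label to its expanded terms,
    e.g. the output of a vocabulary-expansion step.
    """
    groups = []
    for terms in concepts.values():
        # De-duplicate while preserving order, so identical input
        # always produces the identical query string.
        unique = list(dict.fromkeys(t.strip() for t in terms if t.strip()))
        if not unique:
            continue
        ored = " OR ".join(f'"{t}"[{field}]' for t in unique)
        groups.append(f"({ored})")
    return " AND ".join(groups)


# Illustrative terms only; not taken from the paper's query set.
concepts = {
    "intervention": ["telehealth", "telemedicine", "telehealth"],
    "setting": ["rural health", "rural population"],
}
query = build_boolean_query(concepts)
print(query)
```

Because the query is an explicit string built deterministically from the term lists, it can be logged, inspected, and re-run, which is the kind of reproducibility and interpretability the comparison evaluates.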

The system is organized as a three-stage pipeline from entity extraction to query expansion to retrieval and re-ranking (Figure 1).
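The final re-ranking stage can be sketched as cosine similarity between an exemplar article's embedding and each retrieved article's embedding, with the scores kept so they can be shown to the user. This is an assumption-laden illustration: the three-dimensional vectors are toy stand-ins for real sentence embeddings (the summary names MPNet as one option), and `cosine`, `rerank`, and the document IDs are hypothetical names, not the system's actual code.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def rerank(exemplar: Sequence[float],
           candidates: Dict[str, Sequence[float]]) -> List[Tuple[str, float]]:
    """Sort candidates by similarity to the exemplar, highest first."""
    scored = [(doc_id, cosine(exemplar, vec)) for doc_id, vec in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy vectors standing in for embeddings of the exemplar and retrieved articles.
exemplar = [1.0, 0.0, 1.0]
candidates = {
    "doc_a": [1.0, 0.0, 1.0],
    "doc_b": [0.0, 1.0, 0.0],
    "doc_c": [1.0, 1.0, 0.0],
}
ranked = rerank(exemplar, candidates)
for doc_id, score in ranked:
    # Exposing the score alongside each result is what lets a user inspect the ranking.
    print(f"{doc_id}\t{score:.2f}")
```

Returning the scores with the ranking, rather than only the ordered list, mirrors the point in the pipeline description that similarity scores are shown to users.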

Key contributions

- An empirical comparison of NeuroLit Navigator vs. Scite/Consensus/Perplexity on systematic-review-oriented criteria (not only relevance).

- Evidence that combining human-in-the-loop review with structured vocabularies supports reproducibility and interpretability, which the commercial systems did not expose.

- A staged neurosymbolic pipeline that explicitly combines controlled vocabularies (MeSH/UMLS) with neural components for query expansion and re-ranking.

Recommended citation: Valerie Vera*, Vedant Khandelwal*, Kaushik Roy, Ritvik Garimella, Harshul Surana, and Amit Sheth. (2025). "AI-Augmented Search for Systematic Reviews: A Comparative Analysis." Proceedings of the Association for Information Science and Technology 62, no. 1: 705-717. (* Equal contribution)
