Explainable Pathfinding for Inscrutable Planners with Inductive Logic Programming

Published in ICAPS 2022 Workshop on Explainable AI Planning, 2022

This paper explains pathfinding behavior across an entire domain by learning a compact, human-readable macro-action graph from planner outputs using inductive logic programming.

Why it matters

- Modern planners (search, RL, neural methods) can solve pathfinding problems but cannot explain their solutions clearly.

- In many domains, human-understandable explanations are more important than optimal paths.

- Most explainable planning methods focus on explaining a single instance; this work explains all possible instances of a problem.

- Constraining solutions to an interpretable space improves trust, education, and knowledge transfer.

What we did

- Introduced the Explainable Pathfinding Graph (e-graph) to represent solutions for all states in a domain.

- Represented nodes as first-order logic programs (state sets) and edges as macro-actions.

- Used Inductive Logic Programming (Popper) to learn human-understandable preconditions from planner-generated solutions.

- Defined explanation simplicity as minimizing total macro-action cost in the graph.

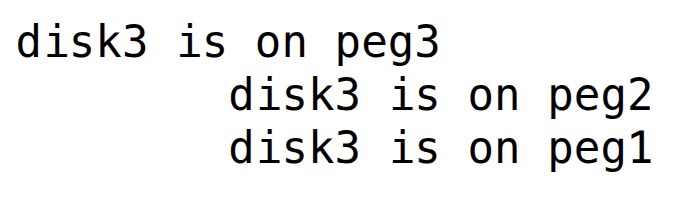

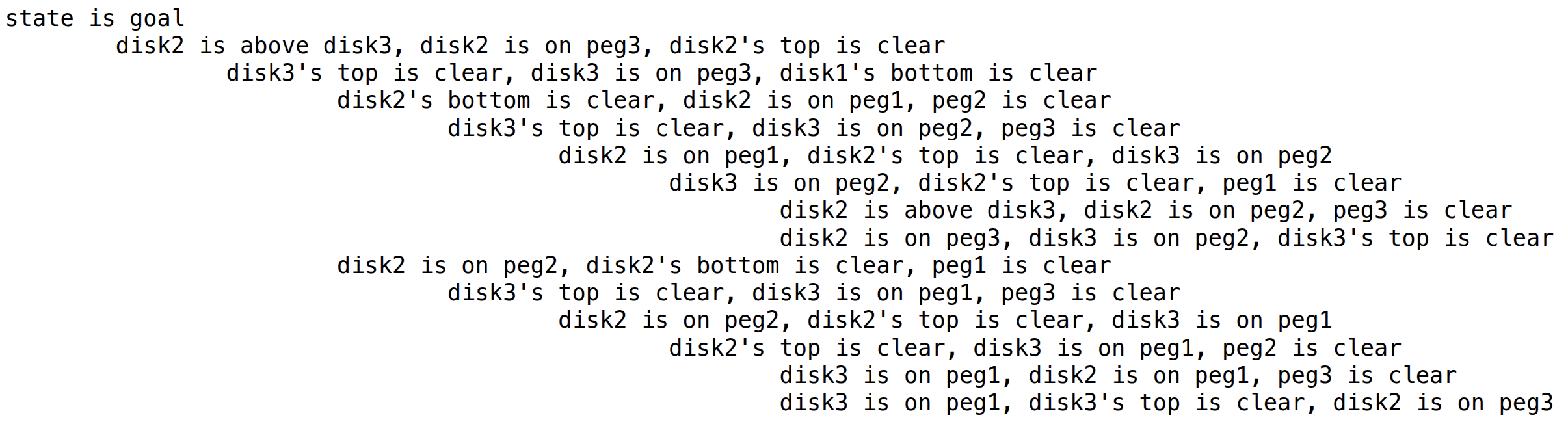

- Evaluated on 3-disk Towers of Hanoi (27 states), learning a 15-node graph, later compressed to 12 nodes, and finally to a 3-node hierarchical abstraction.

As shown in Figure 1, the explanation collapses to three high-level rules centered on the largest disk.

How it works

- Initialize goal node as a logic program representing solved states.

- Generate sample states and use a planner rho (BFS for Towers of Hanoi) to extract macro-actions.

- Apply macro-actions to unsolved states to create positive and negative examples.

- Learn first-order logic preconditions using ILP to define new graph nodes.

- Greedily minimize explanation cost, measured as the sum of macro-action lengths.

- Post-process the graph into hierarchical or if/else explanations.

The initial e-graph (Figure 2) contains 15 logic-defined state clusters connected by macro-actions.

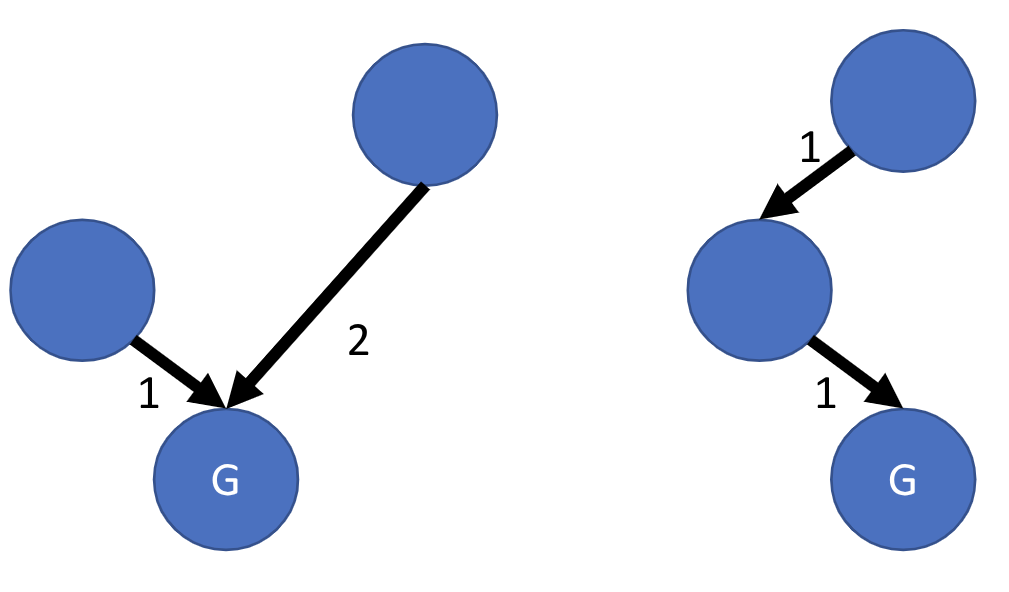

Figure 3 illustrates how compositionality reduces total explanation cost.

Key contributions

- Introduces the e-graph framework for explaining solutions to all instances of a pathfinding problem.

- Formalizes explanation simplicity as a graph cost minimization objective.

- Integrates ILP (Popper) with an arbitrary planner to induce explainable macro-level structure.

- Demonstrates hierarchical abstraction and rule-based compression on Towers of Hanoi (27-state domain).

- Discusses extension to Rubik's Cube using DeepCubeA and hindsight experience replay.

Recommended citation: Forest Agostinelli, Rojina Panta, Vedant Khandelwal, Biplav Srivastava, Bharath Chandra Muppasani, Kausik Lakkaraju, and Dezhi Wu. (2022). "Explainable Pathfinding for Inscrutable Planners with Inductive Logic Programming." ICAPS 2022 Workshop on Explainable AI Planning.

Download Paper