Demo Alleviate: Demonstrating Artificial Intelligence Enabled Virtual Assistance for Telehealth: The Mental Health Case

Published in Proceedings of the AAAI Conference on Artificial Intelligence (AAAI-23 Demo Track), 2023

Alleviate is an AI-enabled telehealth assistant for mental healthcare that couples personalized knowledge representation with medically grounded safety constraints and explainable refinement.

Why it matters

- Demand for mental healthcare rose sharply after the COVID-19 pandemic, while clinician availability declined.

- Existing chatbots mostly handle scripted tasks (for example, reminders and scheduling) and offer little personalization.

- Safe mental-health assistance requires strict adherence to medically established guidelines.

- Clinicians need AI systems that provide explainable reasoning and integrate structured medical knowledge with patient-specific data.

- Emergency-risk detection (for example, suicidal ideation) requires structured and reliable monitoring mechanisms.

What we did

- Proposed Alleviate, a mental-health chatbot designed to assist patients and clinicians.

- Represented patient information as a personalized knowledge graph integrating provider notes, chatbot interactions, and clinically validated knowledge bases.

- Enforced safety using graph and tree path constraints aligned with medical guidelines.

- Used dense representation similarity to align patient entities with external medical knowledge (for example, medication interactions).

- Incorporated explainable reinforcement learning to support feedback-based refinement.

- Included modules for safe and explainable medication reminders, appraisal of adherence to recommendations, and detection of behaviors requiring emergency human intervention.

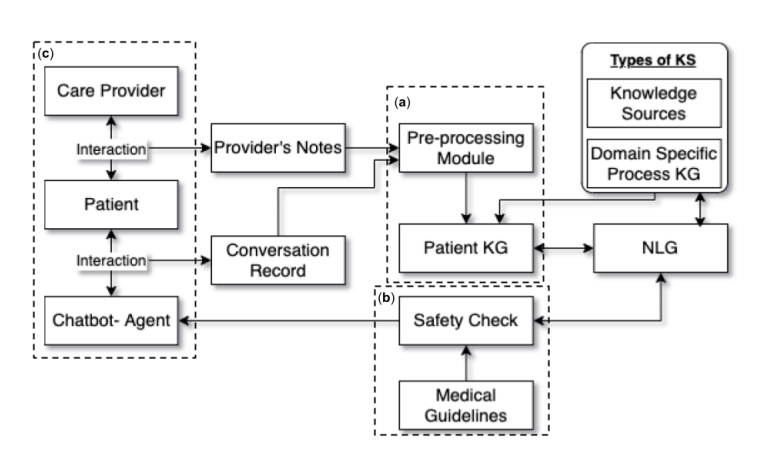

The overall architecture is illustrated in Figure 1, showing how patient data, medical knowledge, and safety constraints are integrated.
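The personalized knowledge graph and the dense-similarity alignment step described above can be pictured with a minimal Python sketch. It assumes hand-written triples, toy three-dimensional vectors in place of learned embeddings, cosine similarity as the similarity measure, and a 0.8 acceptance threshold. The patient ID, entity names, and knowledge-base labels are hypothetical, and this is not Alleviate's actual code.

```python
import math
from collections import defaultdict

# Hypothetical triples; in Alleviate these would come from provider notes and chat turns.
patient_triples = [
    ("patient_42", "takes", "sertraline"),
    ("patient_42", "reports", "insomnia"),
    ("patient_42", "takes", "ibuprofen"),
]

# Toy 3-d vectors standing in for learned dense embeddings (assumption).
patient_vecs = {
    "sertraline": [0.9, 0.1, 0.0],
    "ibuprofen": [0.0, 0.8, 0.3],
    "insomnia": [0.1, 0.0, 0.9],
}
kb_vecs = {  # hypothetical external knowledge-base concepts
    "Sertraline (SSRI)": [0.85, 0.15, 0.05],
    "NSAID": [0.05, 0.9, 0.2],
    "Sleep disorder": [0.0, 0.1, 0.95],
}


def build_graph(triples):
    """Index <subject, predicate, object> triples as subject -> [(predicate, object), ...]."""
    graph = defaultdict(list)
    for subj, pred, obj in triples:
        graph[subj].append((pred, obj))
    return graph


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def align(entity, threshold=0.8):
    """Return the most similar KB concept if it clears the threshold, else None."""
    if entity not in patient_vecs:
        return None
    best, best_score = None, threshold
    for concept, vec in kb_vecs.items():
        score = cosine(patient_vecs[entity], vec)
        if score >= best_score:
            best, best_score = concept, score
    return best


graph = build_graph(patient_triples)
for pred, obj in graph["patient_42"]:
    print(f"patient_42 --{pred}--> {obj}  aligned to: {align(obj)}")
```

Keeping a threshold means an entity with no close match returns `None` instead of being forced onto the nearest concept, which is the safer default when the aligned concept later drives checks such as medication interactions.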

How it works

- Personalized Knowledge Graph Construction: extracts ⟨subject, predicate, object⟩ triples from provider notes and conversations to build a patient-specific graph.

- Knowledge Integration: aligns patient entities with external medical knowledge bases using dense representation similarity.

- Safety-Constrained Reasoning: enforces clinical guidelines via graph and tree path constraints.

- Explainable RL Refinement: uses reinforcement learning with clinician feedback for iterative improvement.

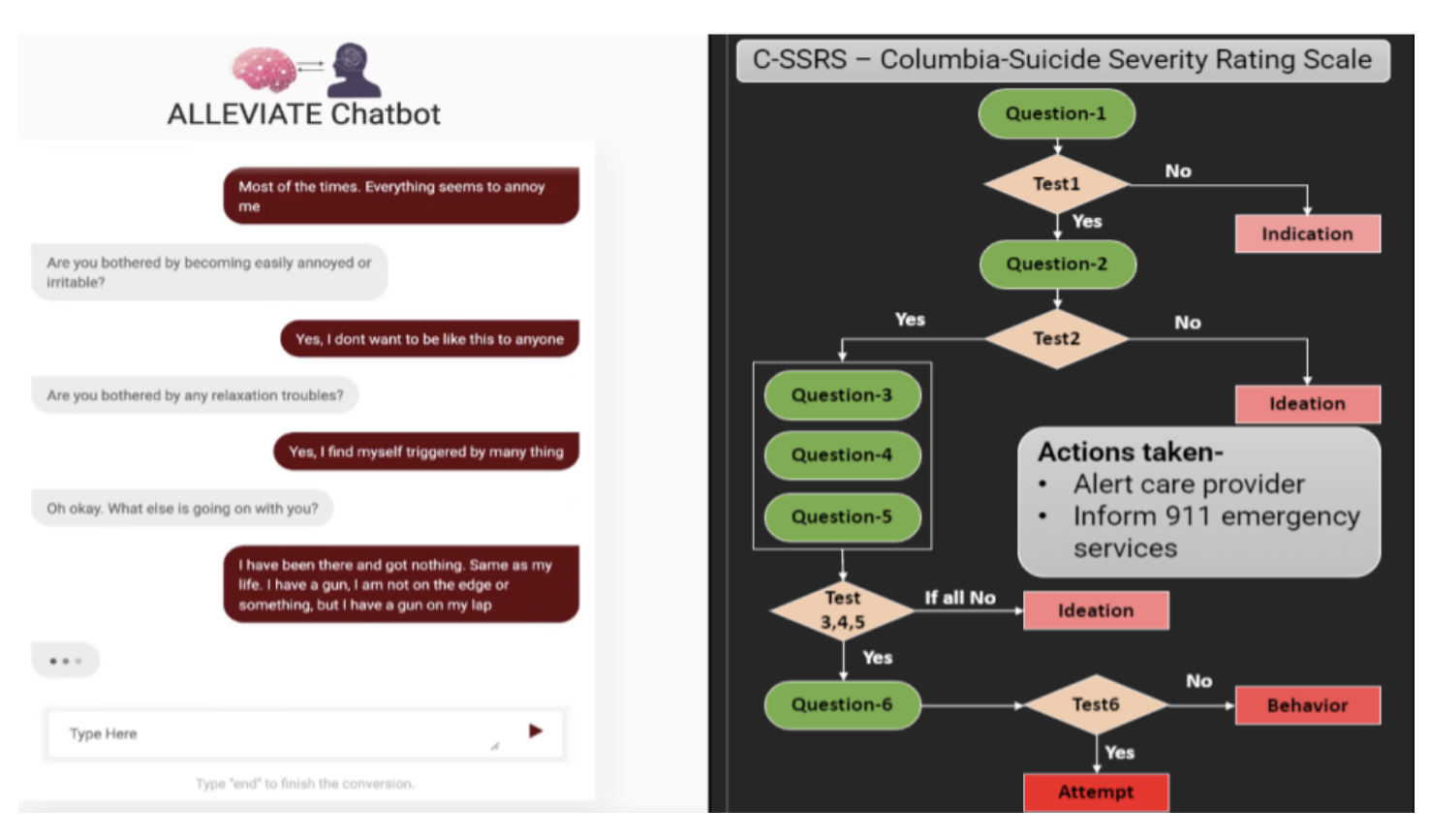

- Emergency Detection: matches conversation patterns against clinically established alarming-behavior questionnaires to trigger human intervention when necessary.

Figure 2 shows how the system identifies alarming behavioral patterns and escalates to human intervention.
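The emergency-detection idea of screening each conversation turn against questionnaire items and escalating on any match can be sketched in a few lines. The two items below are hypothetical placeholders, not a validated instrument, and token-overlap (Jaccard) scoring with a 0.3 threshold is an assumption chosen for simplicity; the paper's matching method may differ, for example by using dense representations.

```python
import re

# Hypothetical placeholder items; NOT a clinically validated questionnaire.
ALARM_ITEMS = {
    "self_harm": "thoughts of hurting yourself",
    "hopelessness": "feeling hopeless about the future",
}


def tokens(text):
    """Lowercase word tokens of a string, as a set."""
    return set(re.findall(r"[a-z']+", text.lower()))


def jaccard(a, b):
    """Jaccard overlap of two token sets (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def screen_turn(utterance, threshold=0.3):
    """IDs of questionnaire items whose token overlap with the utterance meets the threshold."""
    utt = tokens(utterance)
    return [item_id for item_id, text in ALARM_ITEMS.items()
            if jaccard(utt, tokens(text)) >= threshold]


def needs_human(conversation, threshold=0.3):
    """Return (escalate?, sorted matched item IDs) over all turns of a conversation."""
    hits = {item_id for turn in conversation for item_id in screen_turn(turn, threshold)}
    return bool(hits), sorted(hits)


print(needs_human(["I slept well", "I keep having thoughts of hurting myself"]))
```

Returning the matched item IDs alongside the flag gives the clinician a reason for the escalation, not just an alert.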

Key contributions

- Introduces a graph-based framework integrating patient-specific data with clinically validated mental-health knowledge sources.

- Formalizes safety enforcement using graph and tree path constraints aligned with medical guidelines.

- Implements explainable reinforcement learning for feedback-based refinement.

- Demonstrates integrated modules for medication support, adherence appraisal, and emergency behavior detection.
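One way to read safety enforcement through path constraints is that a proposed sequence of assistant actions is allowed only if it traces a path through a guideline structure. The sketch below assumes a small hand-written directed graph of permitted transitions (a tree is the special case in which every node has one parent); the action names are hypothetical and the encoding is not taken from the paper.

```python
# Hypothetical guideline structure: each step maps to the steps a guideline permits next.
GUIDELINE = {
    "start": ["check_in"],
    "check_in": ["ask_medication_taken", "screen_mood"],
    "ask_medication_taken": ["log_adherence", "send_reminder"],
    "send_reminder": ["log_adherence"],
    "screen_mood": ["log_mood", "escalate_to_clinician"],
}


def first_violation(path, graph=GUIDELINE, root="start"):
    """Index of the first action the guideline does not permit, or None if the whole path is allowed."""
    current = root
    for i, action in enumerate(path):
        if action not in graph.get(current, []):
            return i
        current = action
    return None


def is_permitted(path, graph=GUIDELINE, root="start"):
    """True when every step of the path follows a permitted transition from the root."""
    return first_violation(path, graph, root) is None


plan = ["check_in", "ask_medication_taken", "send_reminder", "log_adherence"]
print(is_permitted(plan))                              # True
print(first_violation(["check_in", "send_reminder"]))  # 1
```

Reporting the index of the first disallowed step, rather than a bare yes or no, keeps the check explainable: it points at the exact action that departs from the guideline.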

Recommended citation: Kaushik Roy, Vedant Khandelwal, Raxit Goswami, Nathan Dolbir, Jinendra Malekar, and Amit Sheth. (2023). "Demo Alleviate: Demonstrating Artificial Intelligence Enabled Virtual Assistance for Telehealth: The Mental Health Case." Proceedings of the AAAI Conference on Artificial Intelligence 37(13): 16289-16290.
