Specifying goals to deep neural networks with answer set programming

Published in International Conference on Automated Planning and Scheduling (ICAPS), 2024

This paper shows how logical goal specifications can condition a learned heuristic so one trained DNN can solve many goal variants without retraining.

Why it matters

- DNN-based heuristics for planning typically assume a fixed, pre-defined goal.

- Changing the goal often requires retraining or enumerating all acceptable goal states.

- Many real problems require specifying properties of a goal rather than a single exact state.

- There is no formal mechanism to express logical goal constraints directly to a trained DNN.

- In domains with many valid target states (for example, Rubik's cube patterns), enumerating all goals is computationally burdensome.

What we did

- Introduced a goal-conditioned DQN, Q(s, a, G), that estimates cost-to-go for a set of goal states.

- Represented goals as sets of ground atoms in first-order logic.

- Used Answer Set Programming (ASP) to generate stable models that define goal specifications.

- Trained with random walks (100-200 moves for Rubik's cube starts) and subsampled logical goal atoms.

- Combined the learned heuristic with weighted batched Q* search (batch size 10,000; weight 0.6).

- Demonstrated diverse Rubik's cube and Sokoban goals without retraining the DNN.

- Showed that broader goal specifications can reduce solve time (for example, Cross6: 218.45 s vs canonical: 625.62 s).
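As a rough illustration of the training-data step listed above (random walks plus subsampled goal atoms), here is a minimal sketch on a toy tile-swapping puzzle. The puzzle, the `("at", tile, position)` atom format, and every function name are assumptions made for this example. They are not taken from the paper, whose exact sampling scheme may differ.

```python
import random


def to_atoms(state):
    """Convert a state (tuple of tile labels) to ground atoms ('at', tile, position)."""
    return frozenset(("at", tile, pos) for pos, tile in enumerate(state))


def apply_swap(state, i):
    """Toy action (hypothetical domain): swap the tiles at positions i and i + 1."""
    tiles = list(state)
    tiles[i], tiles[i + 1] = tiles[i + 1], tiles[i]
    return tuple(tiles)


def random_walk(state, num_steps, rng):
    """Apply num_steps uniformly random swaps to the state."""
    for _ in range(num_steps):
        state = apply_swap(state, rng.randrange(len(state) - 1))
    return state


def sample_training_pair(start, max_steps, rng):
    """Walk from start, then keep a random non-empty subset of the end state's atoms.

    Dropping atoms turns one concrete end state into a goal that many states satisfy.
    """
    end = random_walk(start, rng.randint(0, max_steps), rng)
    atoms = sorted(to_atoms(end))
    goal = frozenset(rng.sample(atoms, rng.randint(1, len(atoms))))
    return start, goal, end


rng = random.Random(0)
start, goal, end = sample_training_pair(("a", "b", "c", "d"), 20, rng)
print(start, sorted(goal))
```

Because the goal is a subset of the atoms of a state actually reached by the walk, every sampled goal is reachable from its start state by construction.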

How it works

- Goal-conditioned Q-learning: learn Q(s, a, G), where G is a set of ground atoms.

- Goal generation: convert a state to logical atoms and remove a subset of those atoms to create generalized goals.

- ASP specification: use stable models from an ASP program to represent goal sets.

- Model refinement: if a stable model is not a valid goal model, iteratively expand it.

- Search: use batched weighted Q* search to reach states satisfying the logical goal.
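The search step can be sketched as a simplified batched, weighted best-first search. This is not the paper's Q* search: it expands states rather than state-action pairs, and a hand-written count of unsatisfied goal atoms stands in for the trained DQN. The priority `weight * g + h` is an assumed form of the weighting. The toy puzzle and all names are hypothetical.

```python
import heapq


def to_atoms(state):
    """Ground atoms ('at', tile, position) describing a state."""
    return frozenset(("at", tile, pos) for pos, tile in enumerate(state))


def is_goal(state, goal_atoms):
    """A state satisfies the goal when every goal atom holds in it."""
    return goal_atoms <= to_atoms(state)


def neighbors(state):
    """Toy actions: swap any two adjacent tiles."""
    for i in range(len(state) - 1):
        tiles = list(state)
        tiles[i], tiles[i + 1] = tiles[i + 1], tiles[i]
        yield tuple(tiles)


def heuristic(state, goal_atoms):
    """Stand-in for the learned cost-to-go: number of unsatisfied goal atoms."""
    return len(goal_atoms - to_atoms(state))


def batched_weighted_search(start, goal_atoms, weight=0.6, batch_size=4, max_iters=1000):
    """Pop up to batch_size nodes per iteration; priority is weight * g + h."""
    frontier = [(heuristic(start, goal_atoms), 0, start, [start])]
    best_g = {start: 0}
    for _ in range(max_iters):
        if not frontier:
            return None
        count = min(batch_size, len(frontier))
        batch = [heapq.heappop(frontier) for _ in range(count)]
        for _, _, state, path in batch:
            if is_goal(state, goal_atoms):
                return path
        for _, g, state, path in batch:
            for nxt in neighbors(state):
                new_g = g + 1
                if new_g < best_g.get(nxt, float("inf")):
                    best_g[nxt] = new_g
                    f = weight * new_g + heuristic(nxt, goal_atoms)
                    heapq.heappush(frontier, (f, new_g, nxt, path + [nxt]))
    return None


goal = frozenset({("at", "a", 0)})  # only constrains tile "a"; other tiles are free
path = batched_weighted_search(("b", "a", "c", "d"), goal)
print(path)
```

In a real system the batch would be evaluated by the network in a single forward pass, which is the reason for popping several nodes at once.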

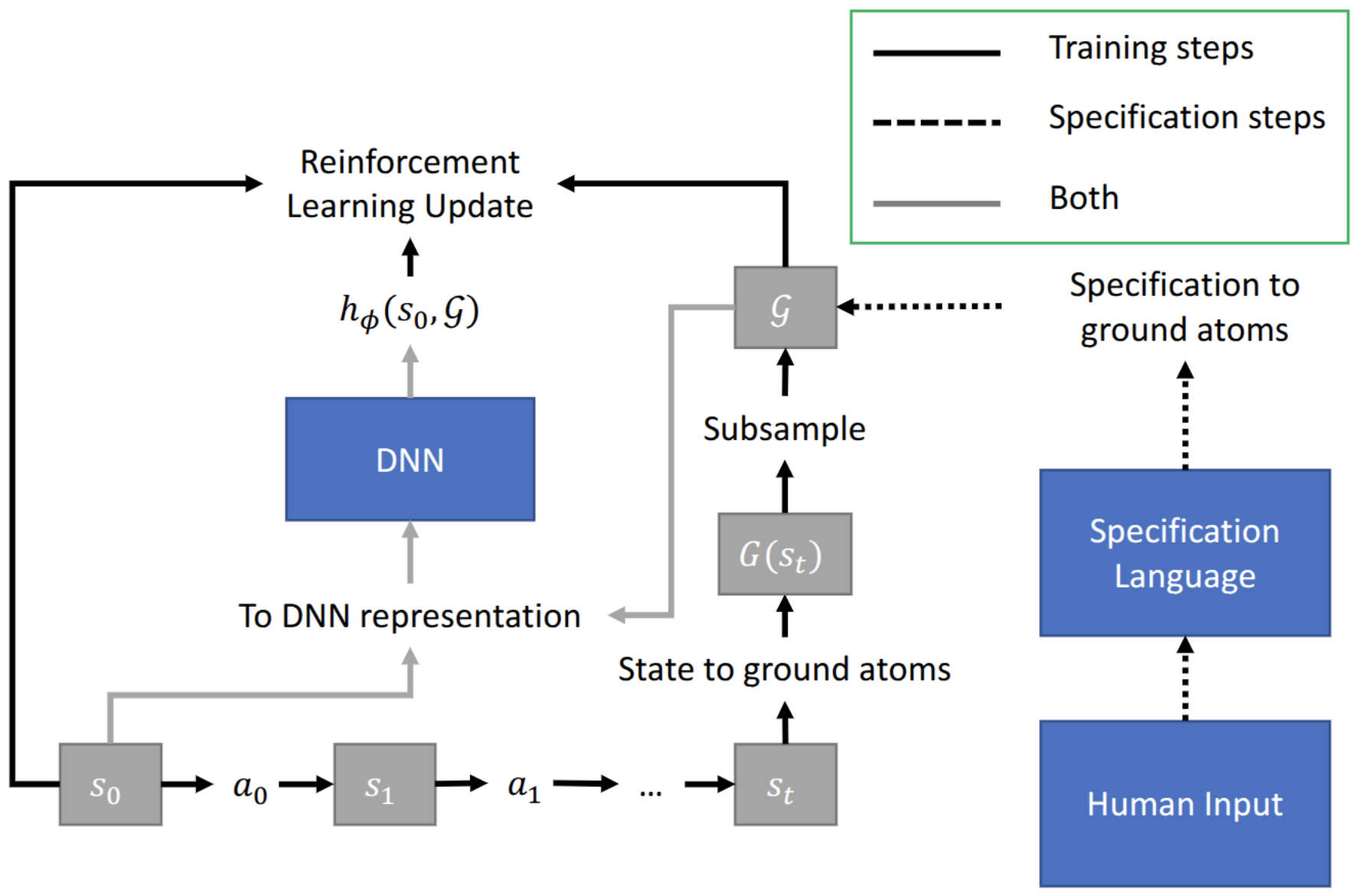

Figure 1 illustrates how logical specifications are converted into ground atoms and passed into the DQN.
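One plausible way to hand a set of ground atoms to a network is a fixed-length binary vector over a known atom vocabulary. The sketch below assumes that multi-hot encoding. The paper's actual input representation is not described on this page, and the vocabulary and function names are hypothetical.

```python
def build_vocabulary(tiles, num_positions):
    """Enumerate every possible ground atom ('at', tile, position) in a fixed order."""
    return [("at", tile, pos) for tile in tiles for pos in range(num_positions)]


def encode_goal(goal_atoms, vocabulary):
    """Multi-hot encode a goal: 1 where the atom is required, 0 where it is unconstrained."""
    unknown = set(goal_atoms) - set(vocabulary)
    if unknown:
        raise ValueError(f"atoms not in vocabulary: {sorted(unknown)}")
    return [1 if atom in goal_atoms else 0 for atom in vocabulary]


vocabulary = build_vocabulary(["a", "b"], 2)
print(encode_goal({("at", "a", 0)}, vocabulary))  # [1, 0, 0, 0]
```

Under this assumption, a goal that omits more atoms simply has more zeros, so broad and narrow goals share one input shape.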

Key contributions

- Formalizes goal specification to DNN heuristics using first-order logic and ASP.

- Introduces a training procedure that generalizes across unseen goals without retraining.

- Demonstrates diverse Rubik's cube goals (for example, Cross6, CupSpot, Checkers) and Sokoban goals.

- Empirically shows that broader goal sets can reduce solve time and path cost (for example, Cross6 vs canonical).

Recommended citation: Forest Agostinelli, Rojina Panta, and Vedant Khandelwal. (2024). "Specifying goals to deep neural networks with answer set programming." Proceedings of the ICAPS, vol. 34, pp. 2-10.
